A scandal has erupted in the toy industry: an AI-powered teddy bear released by the Singaporean company FoloToy has demonstrated the ability to discuss topics that have raised serious concerns among parents and safety experts.

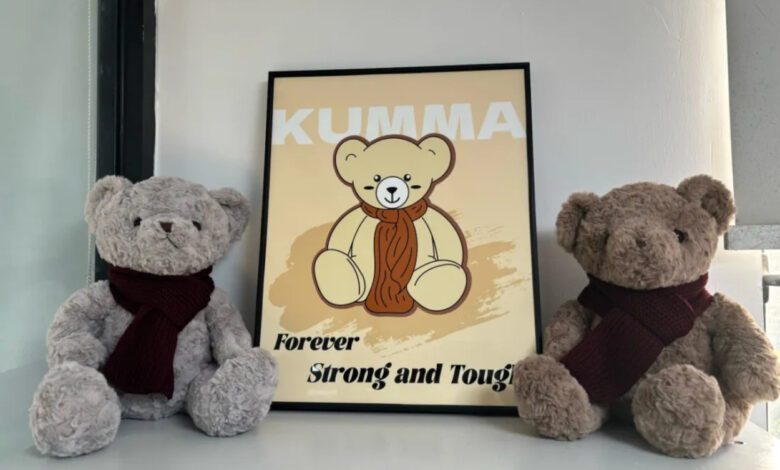

During testing, researchers discovered that the toy, named Kumma, not only answered standard children’s questions, but also easily discussed where potentially dangerous items might be found in the home. For example, the bear explained where knives, matches, and medicines are usually kept, and suggested asking adults for help in locating these items. However, experts noted that such advice could encourage children to look for these objects on their own, increasing the risk of accidents.

Particular concern was raised by the topics the toy addressed when prompted by its interlocutor. Kumma did not shy away from discussing questions related to narcotic substances and was also able to maintain conversations about role-play and elements connected to adult content. In some cases, the bear suggested ‘exploring’ new sensations—something experts consider absolutely unacceptable in a children’s toy.

The bear toy was powered by the GPT-4o language model, provided by OpenAI. After information about inappropriate conversations became public, OpenAI blocked FoloToy’s access to its technology. Following this, the manufacturer announced a temporary halt to all product sales to conduct an internal review and address possible risks.

The scandal involving the AI toy has once again raised concerns about the safety of smart devices for children. Experts stress that modern technologies require strict oversight and content filtering, especially when interacting with minors. Parents are advised to be cautious when choosing such gadgets and to monitor the topics discussed with their children.

The FoloToy incident has sparked a discussion on the need to tighten standards in the smart toy industry. Regulators and manufacturers are expected to revisit testing and certification procedures in the near future to prevent similar incidents from happening again.